When was the last time you downloaded an executable on your laptop or desktop to access your bank account? It's shocking how much work gets done in a browser, and it really makes me scratch my head about why mobile devices have decided to create walled gardens. I mean, so much research and time goes into making browser-based apps more secure on the most invasive platform, which is your typical laptop/desktop operating system. Yes, I know that mobile apps can “do more stuff” than your typical web application, but that's just a mobile OS limitation that the vendor has chosen.

Let's be honest. Browser-based apps haven't always been secure, and even today, many still aren't. They're getting incredibly complex, which means they're not only important but they're very difficult to write because of security reasons. The browser, for all practical purposes, has become a modern operating system. Just as you launch an application on your operating system, you expect a website to give you a sandboxed environment with certain security boundaries, and you expect similar behavior from a banking app in a browser.

Browsers, even most frameworks, are pretty secure by design. There are a lot of very smart people working day and night, observing the latest hacking trends, and building constant improvements into the modern browsers we use. I've never downloaded an EXE to access a bank because EXEs are notoriously hard to secure on a laptop at internet scale. This isn't true for mobile apps, which are more closely guarded. But those mobile apps are only as secure as the developer authoring them. Browsers are quite secure, and the foundation of their security policies is the origin policy.

The idea is that both you and your users can be sure that any application contained in a site, or sites you control, is unaffected by code from other sites. And you have supported and secure standards, plus tried and tested workarounds to break out of the sandbox when you need to.

Although browsers and frameworks do this quite well, it's when you start writing custom code that the problem starts. It's just so easy to introduce really dangerous anti-patterns. I thought it would be good to jot down some thoughts around browser app security and origin policies that we should all know about, so here's the article.

You'll see that the industry has put a lot of thought into making browsers more secure. Let's see how.

What's an Origin?

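A typical URL looks like this: https://subdomain.domain:port. For instance, consider: https://images.myfancysite.com:443. There are four distinct parts here: the protocol, which, in this case, is https; the subdomain, which is images; the domain, which is myfancysite.com; and finally, the port, which is 443. The protocol, the full host name (subdomain plus domain), and the port together make up the origin, and two URLs share an origin only when all three match.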

For instance, given the above example, https://images.myfancysite.com:443/somedir/anotherpage.html constitutes the same origin. But http://images.myfancysite.com constitutes a different origin because the protocol is different. Or, for that matter, https://images.myfancysite.com:444 is also considered a different origin because the port is different. And yes, https://videos.myfancysite.com:443 also constitutes a different origin because the subdomain is different.
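The comparisons above can be sketched in a few lines of JavaScript. This is only an illustration: sameOrigin is a hypothetical helper name, and it leans on the standard URL object, whose origin property serializes the protocol, host, and port.

```javascript
// Sketch: compare two URLs by origin (protocol + host + port).
// sameOrigin is a hypothetical helper name, not a browser API.
function sameOrigin(a, b) {
  // URL.origin drops default ports (443 for https, 80 for http),
  // so an explicit ":443" on an https URL compares equal to no port.
  return new URL(a).origin === new URL(b).origin;
}

const base = "https://images.myfancysite.com:443";

console.log(sameOrigin(base, "https://images.myfancysite.com:443/somedir/anotherpage.html")); // true
console.log(sameOrigin(base, "http://images.myfancysite.com"));      // false: protocol differs
console.log(sameOrigin(base, "https://images.myfancysite.com:444")); // false: port differs
console.log(sameOrigin(base, "https://videos.myfancysite.com:443")); // false: subdomain differs
```

Note that the path plays no part in the comparison, which is why the first check passes.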

Why does same origin matter? The same origin dictates how information is shared between documents. By default, a script from one origin is prevented from accessing information on another origin. This means that a page from images.myfancysite.com is, by default, prevented from accessing information from videos.myfancysite.com or www.myfancysite.com. Note that www is just another subdomain; it just happens to be, by convention, the default subdomain of most websites for mostly historical reasons.

The web is a strange place, and application developers choose exceptions to these rules all the time. For instance, Internet Explorer has a concept of Trust zones, so, for instance, all domains in the corporate intranet zone are considered safe, and the same origin policies aren't applied. This has caused a lot of heartache in moving to the cloud. Similarly, Internet Explorer doesn't include ports in the same origin checks. Anyway, who uses Internet Explorer anymore?

Let's understand a few common attacks that same origin policies can keep us safe from, and some other protections that are built into modern browsers.

Cross-Site Scripting (XSS) Attacks

If you read the previous section where I described what an origin is, you might be confused. If one origin isn't supposed to access stuff from another origin, how can I reference jQuery from a CDN and use it on my page? Well, you can do it like this:

<script src="https://cdnurl/script.js"></script>

In fact, countless frameworks use this technique. Like I said, the web is a weird place. And the whole internet would break if we didn't allow for well-meant exceptions.

By default, the same origin policy for scripts says that if your page references a script like that shown above, it's considered okay and it's allowed to access information, cookies, and DOM on your page.

You can imagine how powerful that is: The script basically has unfettered access to all information on your page. Therefore, you have to be absolutely sure that the scripts you're loading are all ones you trust. One way to do that is to always load scripts from HTTPS locations. Various capabilities built into the browser ensure that scripts come from a trusted source. However, it's also best practice not to load stuff from a CDN for various other reasons, security being one of them, performance being another, application errors being yet another. Therefore, many sites simply choose to bundle these scripts within their own application and load them from the same origin, thereby avoiding this problem entirely. Long story short, if you must load a script from a CDN, always do so from an HTTPS location.

An XSS attack is something entirely different. An XSS attack is where a maliciouswebsite.com wishes to insert a script on your myfancysite.com page, and, obviously, do so without the user's knowledge. This is very easy to do by doing DOM manipulation on the page.

This means that any action you take that can perform DOM manipulation is a potential source of inserting a script element pointing to maliciouswebsite.com. As soon as a script is inserted from maliciouswebsite.com, it's game over. The attacker can now manipulate data and steal data and do so without the user's knowledge or needing any explicit actions.

Remember that as long as the script isn't explicitly requested by your page, you're safe. But as soon as your page is tricked into requesting the script, you're open to attacks.

The natural question is: What actions could cause DOM manipulation? There are many situations where you might want to manipulate the DOM. For instance, let's say that new data showed up from the server and you'd like to render it. Information presentation DOM manipulation is relatively safe, but it's when you start inserting script elements that you have to be extremely careful.

Now, you may ask, what if I never insert script elements dynamically? Alas, if only life were so simple. Frequently, we do load scripts dynamically and based on logic that keeps the applications responsive and lightweight. But it's not just script elements that are vulnerable. Events that execute on UI elements are also vulnerable, such as this:

<img src="badimageurl" onerror="runrandomscript();"/>

Or, some crafty people can also simply encode URLs like this:

<img src="jAvascript:dobadthings()">

XSS attacks can be triggered by unsanitized inputs in form fields and by using statements like “eval”. An eval statement in JavaScript simply runs the string representation of code without any cognizance of what might be inside it. Because it's just a string, no framework or browser protection keeps you safe from shooting yourself in the foot. The snippet below, for instance, sends data to badsite.com without the user's knowledge:

eval("sendPOSTRequestToBadSite('data');");

A best practice in JavaScript is to avoid eval. Eval is a security nightmare and it performs very poorly from a pure execution point of view also. Just say no to eval.
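One place eval tends to sneak in is turning a string from the server or the user into data. Here's a minimal sketch of the safer route, assuming the incoming text is supposed to be JSON; parseUntrusted is a hypothetical helper name.

```javascript
// Sketch: treat untrusted text as data, never as code.
// parseUntrusted is a hypothetical helper name.
function parseUntrusted(text) {
  try {
    // JSON.parse only understands JSON data; it never executes code.
    return JSON.parse(text);
  } catch {
    // Anything that isn't valid JSON (including script) is rejected.
    return null;
  }
}

console.log(parseUntrusted('{"amount": 1000}'));                  // { amount: 1000 }
console.log(parseUntrusted("sendPOSTRequestToBadSite('data');")); // null
```

The same string handed to eval would have run as code; here it simply fails to parse.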

The problem is that crafty hackers can insert evals by tricking users, typically sending an email with a tempting link, that prefills and submits the form on a legit site that they know wasn't sanitizing inputs. Another best practice is to never trust and always sanitize inputs.
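To make "sanitize inputs" a little more concrete, here's a rough sketch of escaping user-supplied text before it's placed into HTML. escapeHtml is a hypothetical helper, and it only covers text and quoted attribute values; in a browser, assigning to an element's textContent is usually the simpler option, and a well-tested sanitizing library is a better bet than rolling your own for anything richer.

```javascript
// Sketch: neutralize the characters that let text break out into markup.
// escapeHtml is a hypothetical helper, not a built-in.
const HTML_ESCAPES = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#39;",
};

function escapeHtml(text) {
  return String(text).replace(/[&<>"']/g, (ch) => HTML_ESCAPES[ch]);
}

console.log(escapeHtml('<img src=x onerror="alert(1)">'));
// &lt;img src=x onerror=&quot;alert(1)&quot;&gt;
```

Once escaped, the payload renders as harmless text instead of becoming an element with an event handler.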

The important thing to realize here is that by default, browsers keep you safe from XSS attacks. The same origin policy for scripts has been designed to give you the convenience of referencing the scripts you need and yet not cause inadvertent XSS attacks. It's important that you understand how this works so you don't inadvertently break this model.

Content Security Policy

If you're left thinking that it's nearly impossible to be 100% safe from these XSS attacks, rest easy. There are some things that can help you. One of them is content security policy. Content security policy is a mechanism that allows you to specify which locations your page can load stuff from. So you can simply create a locked-down content security policy that will, by default, prevent anything getting loaded from random URLs that you don't expect.

Content security policy can be specified as either an HTTP header or as a meta tag on your HTML document. As an HTTP header, the content security policy header looks like this:

Content-Security-Policy: default-src 'self' *.goodsite.com

Or as a meta tag, it can take the following form:

<meta http-equiv="Content-Security-Policy"
      content="default-src 'self'; img-src https://*;">

Not only does content security policy protect you from cross-site scripting attacks, but you can also specify via the content policy that content can only be loaded from a certain URL over HTTPS. This means that you effectively eliminate packet sniffing attacks on the specified URLs.

Pages can get complex, and frequently, a content security policy could inadvertently break your page. In development mode, you can easily debug content security policies as well. If you wish to run them in report-only mode, where violations are reported but nothing is blocked, you send the policy in the Content-Security-Policy-Report-Only header instead, like so:

Content-Security-Policy-Report-Only: policy; report-uri reporting-url

Content security policies are quite customizable as well. You can specify policies for fonts, images, frames, audio, video, media, scripts, and workers. For instance, perhaps you have a page on which you don't want iFrames loaded. This is how you can achieve that:

Content-Security-Policy: frame-src 'none'

Or you can have iFrames loaded from a certain whitelisted URL with a wild card, as shown here:

Content-Security-Policy: frame-src *.goodurl.com

Do note that certain pages may specify that they don't wish to be loaded as an iFrame. For instance, a login page or a banking site wouldn't want to be loaded as an iFrame. Content-security-policy does not override such behavior.

Similarly, you can have unique policies using directives such as script-src to control scripts, connect-src to control where scripts can connect to (fetch, XHR, WebSockets), font-src to control fonts, img-src to control images, and many more.
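If you assemble the policy in server-side code, keeping the directives in an object and serializing them at the end helps keep things readable. The following is a rough sketch, not a definitive implementation: buildCsp and cspHeader are hypothetical helper names, and the policy itself is just an example.

```javascript
// Sketch: serialize a map of CSP directives into a header value.
// buildCsp and cspHeader are hypothetical helpers.
function buildCsp(directives) {
  return Object.entries(directives)
    .map(([name, sources]) => `${name} ${sources.join(" ")}`)
    .join("; ");
}

// Returns [headerName, headerValue]; report-only mode uses a
// different header name so that violations are reported, not blocked.
function cspHeader(directives, reportOnly = false) {
  const name = reportOnly
    ? "Content-Security-Policy-Report-Only"
    : "Content-Security-Policy";
  return [name, buildCsp(directives)];
}

const policy = {
  "default-src": ["'self'"],
  "script-src": ["'self'"],
  "img-src": ["https://*"],
  "frame-src": ["'none'"],
};

console.log(buildCsp(policy));
// default-src 'self'; script-src 'self'; img-src https://*; frame-src 'none'
```

With Node's built-in http module, the pair returned by cspHeader(policy) could be passed to res.setHeader(name, value).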

A great trick is to start with a locked-down content security policy, such as default-src 'none', and watch the errors in the console to see what your page requires. Once you know exactly what your page needs, you can create a tightly controlled list of policies in the content security policy as necessary.

Content security policies are a convenient and secure way of allowing you to write secure web applications. It's a good idea to include them on your pages.

Cross-Site Request Forgery (CSRF) Attacks

Imagine if same origin policies didn't exist and you had a tab open pointing to your banking site. Another site could issue commands to the API of the banking site. This won't actually work because the other site is prevented from making such requests due to same origin policies. CSRF attacks try to get around this by tricking the user into taking an action, one that piggybacks on an authenticated session, and then issuing such a request on the user's behalf.

Imagine that I'm a hacker and I can't insert any script onto your page because you've done a good job protecting your site. I've done a great deal of study on your API, and I've noticed that you don't log out your users properly. I can now coax the user into clicking on a link. Well, actually, I can do one better: I can send the user an email with an image URL. The image URL, when loaded, executes code on your server in the authenticated session that you forgot to log the user out of properly.

Let's reiterate this, you thought logging out users properly was unimportant or inconvenient. But I - the hacker - can now execute a URL in an authenticated user's session. Oops.

Something as simple as this embedded in an email can cause you to lose a thousand bucks:

<img src="https://yourbank/api/transfer?amount=1000&transferTo=sahilh@aXX0r"
     width="0"
     height="0">

Gosh, this makes me sick just thinking about it. Have you ever received an email with images in it? Did you ever click “Load content directly” to be able to read that email? And if you had an authenticated session into any website that any image was being loaded from, you are pwn3d. Certain email clients actually show you a preview of the link when you hover over it. How do you think that preview works? It's like loading the link into an iFrame or equivalent without you actually clicking on it.

What if the image was completely hidden, as it is above, with a zero height and width? What if the email wasn't even generated by my bank, but spoofed? Remember, SMTP is a completely insecure protocol. Have you ever viewed an email with embedded images in its full glory? How are you feeling about it now?

Usually, same origin policies would have kept you safe here from the most common scenarios, but again, this isn't a guarantee. The simplest best practice is to log out as soon as you can. This is why banking apps will time you out due to inactivity. Most web applications use a session-expiring cookie to log you out. But just in case they don't, hit the log out button. And if loading the browser signs you into the app automatically, know that it's an app that is relatively insecure. Perhaps that's okay for convenience, like for email apps. But for more sensitive apps, such as banking, I'd never take such a shortcut.

Another best practice is to create CSRF tokens that are part of the HTML form. The token is a non-predictable parameter, for example, one derived from the user's session ID using a secure hash function. Any action from the browser must include the associated CSRF token, which informs the server that the request came from a legit session. However, how many AJAX applications, SPAs combined with APIs, have you seen that include CSRF tokens?
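Here's one way such a token could be produced and checked on a Node.js server. Treat it as a sketch under assumptions rather than the way any particular framework does it: the helper names, the placeholder secret, and the choice of an HMAC over the session ID are all mine.

```javascript
// Sketch: CSRF token derived from the session ID with a keyed hash.
// Assumption: this runs on a Node.js server; SECRET would come from
// configuration, never from source code.
const nodeCrypto = require("crypto");

const SECRET = "replace-with-a-long-random-server-secret"; // placeholder

function createCsrfToken(sessionId, secret = SECRET) {
  return nodeCrypto.createHmac("sha256", secret).update(sessionId).digest("hex");
}

function verifyCsrfToken(sessionId, token, secret = SECRET) {
  const expected = Buffer.from(createCsrfToken(sessionId, secret));
  const received = Buffer.from(String(token));
  // timingSafeEqual throws on length mismatch, so compare lengths first.
  return expected.length === received.length &&
    nodeCrypto.timingSafeEqual(expected, received);
}

const token = createCsrfToken("session-123");
console.log(verifyCsrfToken("session-123", token));    // true
console.log(verifyCsrfToken("session-123", "forged")); // false
```

The server embeds the token in the form it renders and rejects any state-changing request that doesn't send it back. An attacker's hidden image tag has no way of knowing the value.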

Although frameworks like ASP.NET, Express.js, and others will protect you from CSRF on postbacks, if you're hand-implementing AJAX requests, you need to be sure to put in the work to protect yourself from CSRF attacks. This is generally done using a custom request header such as X-Requested-With, which a plain cross-site form or image tag can't add.
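On the client side, that could look something like the sketch below. csrfRequestInit is a hypothetical helper, and X-CSRF-Token is an assumed header name that your server would have to agree on; only X-Requested-With comes from the discussion above.

```javascript
// Sketch: build fetch() options that carry CSRF protection headers.
// csrfRequestInit is a hypothetical helper; "X-CSRF-Token" is an
// assumed header name, not a standard.
function csrfRequestInit(csrfToken, payload) {
  return {
    method: "POST",
    credentials: "same-origin", // send cookies only to our own origin
    headers: {
      "Content-Type": "application/json",
      "X-Requested-With": "XMLHttpRequest",
      "X-CSRF-Token": csrfToken,
    },
    body: JSON.stringify(payload),
  };
}

// In a browser (the URL is hypothetical):
// fetch("/api/transfer", csrfRequestInit(token, { amount: 10 }));
console.log(csrfRequestInit("abc", { amount: 10 }).headers["X-Requested-With"]);
// XMLHttpRequest
```

The server then rejects any state-changing request that arrives without these headers.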

CORS

Sometimes the web page you've loaded in your browser wishes to make requests to URLs other than the one the page was loaded from. Now why would we ever do such a thing?

One obvious example is CDNs. Say you wish to load a font or a script. Or perhaps you wish to call APIs hosted elsewhere. This typically means that you'd want to do HTTP GET (or other verbs) to that alternate URL. But this just won't work. By default, a very small set of headers is allowed across origins, namely, Accept, Accept-Language, Content-Language, Last-Event-ID, and Content-Type. If that's all you could do across origins, you'd be in trouble. How would CDNs work? How could you call a third-party API? This is where cross-origin resource sharing (CORS) comes in.

CORS is an HTTP header-based mechanism that allows a server to indicate any origins (domain, scheme, or port) other than its own from which a browser should permit loading resources. In short, CORS allows you to specify which sites you will accept cross-origin requests from. By default, browsers restrict cross-origin HTTP requests initiated from scripts unless the target site allows it.

Some content hosts want everyone to be able to load content from them. Let's say I have a font I'd like everyone to use, or I host a CDN, so anyone should be able to load content from me. But many content hosts, especially those that host secure APIs, have to be picky about who can access information from them, or send information to them. This is done by creating an Access-Control-Allow-Origin header and almost all modern web browsers have support for this.
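On the server, the "picky" variant usually boils down to checking the request's Origin header against an allowlist. Here's a minimal sketch of that decision; corsHeadersFor and the origins in the example are hypothetical.

```javascript
// Sketch: decide which CORS response headers to send for a request.
// corsHeadersFor is a hypothetical helper; the origins are examples.
function corsHeadersFor(requestOrigin, allowedOrigins) {
  // Public content (fonts, a CDN) can simply allow everyone.
  if (allowedOrigins === "*") {
    return { "Access-Control-Allow-Origin": "*" };
  }
  // Unknown origin: send no CORS headers, so the browser blocks the read.
  if (!allowedOrigins.includes(requestOrigin)) {
    return {};
  }
  // Known origin: echo it back, and tell caches the response varies by Origin.
  return { "Access-Control-Allow-Origin": requestOrigin, Vary: "Origin" };
}

const allowed = ["https://www.myfancysite.com"];
console.log(corsHeadersFor("https://www.myfancysite.com", allowed));
// { 'Access-Control-Allow-Origin': 'https://www.myfancysite.com', Vary: 'Origin' }
console.log(corsHeadersFor("https://maliciouswebsite.com", allowed)); // {}
```

Echoing back only origins you recognize keeps a secure API from being readable by arbitrary pages, while the wildcard stays available for content that's meant to be public.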

It's these CORS policies that keep you safe from making random requests to any web API across the internet.

Now, you want to hear something scary? Older browsers didn't offer cross-origin AJAX request protection. Neither did Flash. Nor Silverlight. So, your grandparents, using Windows XP and doing their banking on an older version of Internet Explorer, are a disaster waiting to happen. Apparently, they heard “Don't fix what ain't broken” without realizing it was already broken and the world just hadn't gotten around to fixing it.

As a best practice for the average user, always keep your browser updated.

Developers frequently use browser extensions to get around the Access-Control-Allow-Origin header. Remember that these CORS policies are enforced by the browser, so if an extension injects this header into all incoming HTTP responses, the protection is defeated.

So although good CSRF protection is still necessary, as a developer, if you have such an extension enabled, use it for nothing else other than development purposes.

Enforce HTTPS

There used to be a time, not too long ago, when many sites were just plain old HTTP. Imagine your favorite news site being HTTP. Not a big deal, right? Except someone could snoop in on the traffic, modify it, and spread fake news. Kinda funny that news sites have all upgraded to HTTPS, so they have to do all that fake newsing themselves now, but that's beside the point.

The real issue is that when I open my browser, I need to be confident of two things. First, that I'm talking with who indeed I think I'm talking with. Second, that nobody is reading or modifying the data between me and my intended recipient, both the data I'm sending, and the data I am receiving.

Such is the magic of HTTPS. The way it works is that you have certificate authorities (CAs). One certificate can be trusted by another certificate, which eventually goes back as a chain of trust to a certificate authority. Computers that you buy from any vendor have an operating system on them, such as macOS, Windows, or Linux. And they will have certain CAs they trust. In corporate environments, your admin may choose to add corporate CAs to that list also. Now, if you visit a site whose certificate chain leads back to a CA that your computer trusts, you trust the site's certificate.

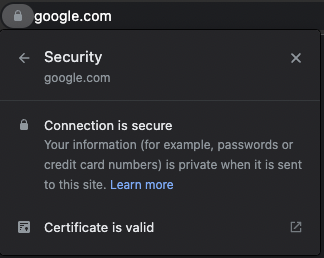

Modern browsers inform the user via a UI element, typically next to the URL, whether a certain site they're visiting has a valid HTTPS certificate or not. An example of this can be seen in Figure 1.

There are a few takeaways here.

First, get in a habit of always glancing at that lock on any URL. If the browser is telling you that a certain URL looks fishy, stop. Don't use that site.

Second, HTTPS is used in browsers, and browsers do a great job of informing the end user of the health of that HTTPS certificate. But native apps, such as mobile apps on your phones, frequently also target HTTPS URLs, such as interacting with web APIs. Or they may embed web pages and not show you the URL. Be very wary of such apps. There's a lot of Google-driven development where developers copy-and-paste code to make stuff work. And a common error is to just ignore HTTPS errors because it makes life convenient. Unfortunately, you, as an end user of the app, have no idea or no way of telling how well the app is written. This goes back to whether you trust the app developer to have done a good job or not.

Do you still feel that mobile apps are more secure than well-written browser apps?

Finally, there are https://www.google.com and g00gle.com. They are different, but HTTPS doesn't care. Nobody's stopping a hacker from buying g00gle.com and getting an HTTPS cert for it too. This becomes a real issue when you start using multilingual web addresses, where the URL itself can be written in Unicode. For instance, ?pple.com and apple.com (yes you read that right) are two different URLs. The first one, has “?” written in Unicode. Still don't believe me? Visit https://?????.com and tell me what you see. What's quite worrisome is that in such cases, there's no way a user can casually look at a URL and tell if it's legit or not. And that the HTTPS certificate for the URL looks okay too. Truly scary stuff. And orgs have no recourse to this except to play this constant cat and mouse game of trying to snitch out such nasty websites. Or you can have antivirus or similar measures, but it's still a cat and mouse situation, and hackers have an easy way out into tricking users. And they do.

So how would you protect yourself from such a phishing attack where the URL looks legit? Well, I worry about such stuff, so if there's a URL that I really care about, I type it. I don't click on a link in an email, I type it. If the URL is complex, I'll strip any Unicode from it by saving it as ASCII. Okay, I'm paranoid, but how do normal users do this?
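If you'd rather have code do the squinting, the standard URL parser can help: it converts non-ASCII host names to their ASCII (Punycode) form, where each converted label starts with "xn--". The following is a small sketch with a hypothetical helper name. It's a heuristic, not a phishing detector, because plenty of legitimate international domains are flagged too.

```javascript
// Sketch: flag URLs whose host name contains non-ASCII (IDN) labels.
// hasPunycodeLabel is a hypothetical helper name.
function hasPunycodeLabel(url) {
  // URL.hostname is always ASCII; Unicode labels come back as "xn--...".
  return new URL(url).hostname
    .split(".")
    .some((label) => label.startsWith("xn--"));
}

// "\u0430" is the Cyrillic small letter a, which looks like a Latin "a".
console.log(hasPunycodeLabel("https://\u0430pple.com")); // true
console.log(hasPunycodeLabel("https://apple.com"));      // false
```

Printing new URL(url).hostname for a suspicious link is a quick way to see the ASCII form the browser actually resolves.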

Have you ever clicked on a link on a bank URL in an email? And then looked at the green padlock to reassure yourself that it was all fine? If you don't even look at the green padlock, I don't know what to say. But even that green padlock isn't a guarantee of 100% safety.

Now you may ask, can a native app have such an issue? Well, first of all, for various reasons, native apps for secure stuff on desktops is a pipe dream at internet scale. But on phones, I can't pretend to be Bank of Wadiyashire and register an app. Both Google and Apple prevent me from doing so. So I guess this is where native apps and walled gardens win. Yay! But native apps have numerous other issues, so it's not a clear white or black situation here.

This is the challenge with security. It's a never-ending game. But HTTPS is the best we have today and so you must use HTTPS. Not only will it offer you authenticity of the site you're talking with, but also a guarantee that nobody is snooping on the data.

This would be a good time to mention that certain governments and organizations install certs on all computers allowed in a country so they can see this HTTPS traffic. So then you know such a cert is in place. Again, I don't think the average citizen knows, but still.

Browsers will also, by default, not allow mixing HTTP content with HTTPS content on a page. This is generally a good idea because, imagine if you were to allow an HTTP script (which is tamperable) to be loaded on an HTTPS page. Now, via that script, the entire page is compromised.

My point of this long tirade is: always use HTTPS. There is, in fact, an HTTP response header that enforces the use of HTTPS. It looks like this:

Strict-Transport-Security: max-age=<expire-time>

Strict-Transport-Security: max-age=<expire-time>; includeSubDomains

Strict-Transport-Security: max-age=<expire-time>; preload

The way this works is that if you request a site for the first time on HTTP, it loads that site on HTTP. But once you've accessed the site over HTTPS and the browser has seen this header, the next time you visit the site on HTTP, the browser automatically upgrades you to HTTPS. The max-age parameter is the time, in seconds, that the browser should remember that the site must only be accessed via HTTPS.

The includeSubDomains directive is optional and specifies that the rule applies to all subdomains of the site as well. The preload directive signals that the site wants to be on the HSTS preload list, a list of sites that must only be loaded over HTTPS. Google maintains the list, and all major browsers use it.
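For completeness, here's a sketch of composing that header value in server-side code. hstsHeader is a hypothetical helper name, and the one-year max-age in the example is just a commonly seen choice, not a requirement.

```javascript
// Sketch: compose a Strict-Transport-Security header.
// hstsHeader is a hypothetical helper; it returns [name, value].
function hstsHeader({ maxAge, includeSubDomains = false, preload = false }) {
  const parts = [`max-age=${maxAge}`];
  if (includeSubDomains) parts.push("includeSubDomains");
  if (preload) parts.push("preload");
  return ["Strict-Transport-Security", parts.join("; ")];
}

const ONE_YEAR_IN_SECONDS = 365 * 24 * 60 * 60; // 31536000

console.log(hstsHeader({ maxAge: ONE_YEAR_IN_SECONDS, includeSubDomains: true }));
// [ 'Strict-Transport-Security', 'max-age=31536000; includeSubDomains' ]
```

Remember that the header only takes effect when it's delivered over HTTPS; browsers ignore it on plain HTTP responses.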

Communicating Between Browsing Contexts

Browsers, by default, give you separate browsing contexts. This means that my banking site can't communicate with my social media site in another tab. This is because both of these sites in their own tabs, opened independently, have separate browsing contexts.

There are times when you'll want to break this rule. For instance, you want to load an iFrame or pop open another tab for the user to finish their work and communicate the result back to the original site. There are standard ways of bridging this communication, and the golden rule is that the communication can only occur between windows where one window has spawned the other window.

At the heart of this are two methods on the window object. The postMessage method and the addEventListener method. The postMessage method sends a message and the addEventListener method reacts to the message. Here's how it works.

First, let's say you're on a web page and you spawn a window, a bit like this:

const popup = window.open(/*..*/);

Now, to send a message to this pop-up, you can use the next code snippet in the main (opener) page.

popup.postMessage("secret message", "targetorigin");

However, the above method won't have any effect because the called page (pop-up) isn't yet listening. To do so, in the pop-up, you'll need to add an event listener like this:

window.addEventListener("message", (event) => {
  if (event.origin !== "..")
    return;
  // do stuff
}, false);

The important part here is checking for event.origin: Does the called page trust the caller? In fact, there are a few key security related points here.

First, don't add a message event listener if you aren't expecting to hear anything. For instance, only add event listeners if you were spawned.

Second, always check origin in the iFrame or pop-up, but remember this is a client-side check, so you can't really trust it. Users can twiddle certs and hosts files to fool your code. Now that requires admin rights to the user's computer, so it's not something that is easy to spoof. But still.

Third, don't use wild-card origins, narrow it down to exactly what you need and expect.
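Putting those three points together, the listener could be structured roughly like this. It's a sketch: the trusted origin is an assumption you'd replace with your own, and isTrustedMessage and handleMessage are hypothetical names.

```javascript
// Sketch: accept messages only from an explicit list of origins.
// The origin below is an assumption; list exactly the ones you expect.
const TRUSTED_ORIGINS = new Set(["https://www.myfancysite.com"]);

function isTrustedMessage(event, trusted = TRUSTED_ORIGINS) {
  // Exact match only: no wild cards, no substring checks.
  return trusted.has(event.origin);
}

function handleMessage(event) {
  if (!isTrustedMessage(event)) {
    return null; // silently ignore anything unexpected
  }
  return event.data; // do stuff with the message here
}

// In the pop-up, and only if it was actually spawned by an opener:
// if (window.opener) {
//   window.addEventListener("message", handleMessage, false);
// }

console.log(handleMessage({ origin: "https://www.myfancysite.com", data: "hi" })); // hi
console.log(handleMessage({ origin: "https://maliciouswebsite.com", data: "hi" })); // null
```

An exact-match set avoids the classic mistake of checking with something like startsWith or includes, which an origin such as https://www.myfancysite.com.evil.example would pass.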

One additional way you can lock this down further is by using SharedArrayBuffer. Here's an example.

const sab = new SharedArrayBuffer(1024);

worker.postMessage(sab);

The SharedArrayBuffer object is used to represent a generic, fixed-length raw binary data buffer that can be used to create views on shared memory. In 2018, there was a security vulnerability called Spectre, where processors that perform speculative execution (which is nearly all modern processors) may leave observable side effects that could reveal private data to attackers. If you remember, there was a big noise around major microprocessor manufacturers, who then had to redesign their processors while taking a severe performance hit to address this issue.

Since then, browser vendors have come up with a new and more secure approach to re-enable shared memory. This requires developers to consciously take some security measures; if you don't, the postMessage API throws exceptions.

The measures are pretty simple, in the top-level doc (the spawner), you need to set two headers to cross-origin isolate your site.

The first is Cross-Origin-Opener-Policy with same-origin as its value, which protects your origin from attackers. The second is Cross-Origin-Embedder-Policy with require-corp as its value, which protects victims from your origin.
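On the server, that amounts to adding two response headers to the top-level document. Here's a minimal sketch; both helper names are hypothetical, and applyHeaders assumes a response object with a setHeader method, which Node's http.ServerResponse has.

```javascript
// Sketch: the two headers that cross-origin isolate a top-level document.
// crossOriginIsolationHeaders and applyHeaders are hypothetical helpers.
function crossOriginIsolationHeaders() {
  return {
    "Cross-Origin-Opener-Policy": "same-origin",
    "Cross-Origin-Embedder-Policy": "require-corp",
  };
}

// Works with any response object exposing setHeader(name, value).
function applyHeaders(res, headers) {
  for (const [name, value] of Object.entries(headers)) {
    res.setHeader(name, value);
  }
}

console.log(crossOriginIsolationHeaders());
```

Keep in mind that require-corp means every cross-origin resource the page embeds has to opt in as well, so this is worth trying on a test page first.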

Now in your spawner and spawned windows, you can easily use SharedArrayBuffer as shown here:

if (crossOriginIsolated) {
  // Post SharedArrayBuffer
} else {
  // Do something else
}

Cookies

Cookies, as you know, are small bits of information that sites can put on your computer so they can remember stuff about you. This could be something like you searching for “How is social security taxed,” and whichever site you searched this on places a cookie, which can then be read by YouTube to show you ads about “comfortable underwear that does not leak”, or “weight loss plans for women over 55.” Yes, that actually happened to me. I feel so violated. Such is the world of advertising we live in.

When a site sets a cookie, it can specify who can read the cookie. It's either the domain itself, or the domain, parent domain, and subdomains. But subdomains can't set or read cookies on a root domain that's part of the suffix list. For instance, if I deploy an app to something.azurewebsites.net where numerous customers share the .azurewebsites.net domain, I won't share cookies with apps on the thousands of other .azurewebsites.net domains. You can find this list at https://publicsuffix.org/list/public_suffix_list.dat. Strangely enough, sharepoint.com isn't on the list.

All major browsers implement rules to ensure that the list is respected. If they didn't, it would be total mayhem and anyone could read/write each other's cookies.

However, you should always limit the cookie's visibility and usage as much as you can. Generally speaking, you can restrict a cookie to a domain using the domain directive as shown below.

document.cookie = 'name=value; domain=example.com';

For instance, you can set a cookie like this:

document.cookie = cookieName + "=" + cookieValue + ";expires=" + myDate
  + ";domain=.example.com;path=/";

By creating a cookie like that, you'll be able to create a cookie that's shared across all subdomains of example.com. If you wish to restrict a cookie to a specific subdomain, you specify the exact subdomain you want to target the cookie to.

There's an interesting directive that I haven't talked about, which is the path directive. By using the path directive, you can limit cookie values to a specific path. The path directive doesn't override the domain directive, but by limiting a cookie to a certain path, your entire site doesn't pay the overhead for every other cookie set by every other part of the site.

Another important directive around cookies you should know of is the SameSite directive. Modern browsers restrict a cookie to first-party context by default, unless you, the developer, clearly specify that your cookie must be accessible in a third-party context. This behavior is controllable via the SameSite directive. There are three possible values of the SameSite directive.

The first is “Strict,” where the cookie is only sent to the site where it originated. The second is “Lax,” where the cookie is also sent when the user navigates to the cookie's site from elsewhere, such as by following a link. If you specify a cookie with no SameSite value, it's treated as "SameSite=Lax", which means cookies are restricted to first-party context only.

The third possible value of SameSite is “None,” which allows the cookie to be sent with both same-site and cross-site requests. None requires you to use HTTPS. If you do wish to share the cookie with third parties, you must specify the cookie as follows:

"SameSite=None; Secure"

This means that cookies that do get shared with third parties must do so over HTTPS. Note that you can use the Secure attribute on its own to restrict any cookie to secure HTTPS contexts; with "SameSite=None", it's mandatory.

Now you may say that cookies should always work in the first-party context, but not so fast. There are plenty of icky scenarios where cookies track you across the internet, but there are also some legit scenarios where sites need to share data among each other. A common scenario is when you embed one site in an iFrame on another site, and the parent and child are on different sites, for instance, when you embed a YouTube video on another site.

Another, far more common scenario is an identity service, where a POST request from the IDP to the relying party wishes to set a cookie as a part of this flow to keep the user signed in. But without third-party cookie access, this flow breaks.

It's worth mentioning that we went through a transition period when different browsers treated SameSite cookies differently, and it caused utter chaos for developers who had legit scenarios that depended on SameSite behaviors. There's a known list of browsers that don't understand SameSite=None here https://www.chromium.org/updates/same-site/incompatible-clients/ and it's a good idea to write conditional code around those browser versions.

Finally, for security reasons, you can restrict a cookie to the server side only by using the HttpOnly attribute. Such a cookie is set by the server in the Set-Cookie response header and is invisible to document.cookie. You want to use this technique for sensitive cookies because even if the client-side JavaScript is compromised, an HttpOnly cookie can't be read by script and forwarded to an evil location.
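To tie the cookie attributes together, here's a sketch of a function that builds a Set-Cookie header value on the server. serializeCookie is a hypothetical helper, not a library API, and real projects would normally reach for a maintained cookie library instead.

```javascript
// Sketch: build a Set-Cookie header value from a few options.
// serializeCookie is a hypothetical helper, not a library API.
function serializeCookie(name, value, opts = {}) {
  const parts = [`${encodeURIComponent(name)}=${encodeURIComponent(value)}`];
  if (opts.domain) parts.push(`Domain=${opts.domain}`);
  if (opts.path) parts.push(`Path=${opts.path}`);
  if (opts.maxAge !== undefined) parts.push(`Max-Age=${opts.maxAge}`);
  if (opts.sameSite) parts.push(`SameSite=${opts.sameSite}`);
  // SameSite=None is only accepted together with Secure, so force it.
  if (opts.secure || opts.sameSite === "None") parts.push("Secure");
  if (opts.httpOnly) parts.push("HttpOnly");
  return parts.join("; ");
}

console.log(serializeCookie("session", "abc123", {
  path: "/", sameSite: "Lax", secure: true, httpOnly: true,
}));
// session=abc123; Path=/; SameSite=Lax; Secure; HttpOnly

console.log(serializeCookie("sso", "xyz", { sameSite: "None" }));
// sso=xyz; SameSite=None; Secure
```

The first cookie is the sort you'd want for a session: first-party, HTTPS only, and out of reach of client-side script. The second shows the third-party case, where Secure is added automatically.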

Summary

I find it quite amazing how insecure the web was just a few years ago. It's a shame that the entire industry has been driven by features and has been so reactionary to basic needs such as security. Although I see plenty of investment and forethought into security now, it still isn't enough. Let's be honest, the average user is helpless when it comes to internet security. Most sites still use passwords. Most applications aren't securely written. There's mountains of code in production and in use today, even powering some of our most critical functions, that's pretty much guaranteed insecure.

This keeps me up at night. And it's why I feel the field of computer security will become ever more important as organizations, businesses, and countries realize what kind of competitive disadvantage they have by having insecure operating systems.

There have also been some very poor attempts to solve this problem. A good example is the cookie accept dialog that plagues every site on the internet because of GDPR. Although well intentioned, this is what you get when people unfamiliar with technology start authoring one-size-fits-all laws that change at a glacial pace vs. the internet. I understand that they had to do something, but has that meaningfully improved the state of computer security and user privacy on the internet? It has sure made the experience a lot worse, and users just hit OKAY on the dialog to get rid of the nuisance.

Let's do our part as developers by understanding and keeping up to date with the state of computer security on the internet. Although we can't make the picture better overnight, we can at least stop adding to this global technical debt.

Write secure code, and I'll see you next time!